Dear finance, this is why cloud costs are complex

- November 30, 2020

This scenario keeps repeating: Finance get an AWS bill, it’s over budget, and, rightly, they are surprised and come to the Engineering team asking why. Once the issue has been resolved, they want to address the root cause: can we work together to set better budgets so that we can all plan ahead and be prepared? This blog post sheds some light on why that is a complex task.

Side note: I need to write a blog post about why Engineering should also know about Finance. Did you know that there can be tax implications when you tag your cloud resources?

Task: How much will it cost to store a 1 GB file in our AWS account?

First, we need to choose which cloud service we would like to use; AWS has “175 fully featured services”. Let’s assume we’d like to use AWS S3 for this use case. Next, we need to choose which type of S3 product we’d like to use. There are 6 different types, depending on how frequently you need to access the file. Across these types and the volume tiers that depend on how much storage we already use, there are 14 different price points. The next question is where the file should be hosted: there are 21 AWS regions to choose from (plus 2 more for Government Cloud). So far, we have 14 prices × 21 regions = 294 different price points.
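To make the arithmetic concrete, here is a minimal Python sketch. The tier boundaries and per-GB prices are hypothetical placeholders, not real AWS S3 rates, and `marginal_storage_cost` is an illustrative helper. It shows two things from the paragraph above: with volume tiers, the cost of one extra gigabyte depends on how much you already store, and independent pricing dimensions multiply.

```python
from typing import List, Optional, Tuple

# Hypothetical volume tiers for one storage type in one region:
# (cumulative upper bound of the tier in GB, or None for "no limit", price per GB-month in USD).
# These are illustrative placeholders, NOT real AWS S3 prices.
HYPOTHETICAL_TIERS: List[Tuple[Optional[float], float]] = [
    (50_000, 0.020),
    (500_000, 0.019),
    (None, 0.018),
]


def marginal_storage_cost(existing_gb: float, new_gb: float,
                          tiers: List[Tuple[Optional[float], float]]) -> float:
    """Monthly storage cost of adding new_gb on top of existing_gb under tiered pricing."""
    start, end = existing_gb, existing_gb + new_gb
    cost = 0.0
    tier_start = 0.0
    for upper, price_per_gb in tiers:
        tier_end = float("inf") if upper is None else float(upper)
        # Only the part of [start, end) that falls inside this tier is billed at this tier's price.
        overlap = max(0.0, min(end, tier_end) - max(start, tier_start))
        cost += overlap * price_per_gb
        tier_start = tier_end
    return cost


# The same 1 GB file is priced differently depending on existing usage.
print(marginal_storage_cost(0, 1, HYPOTHETICAL_TIERS))
print(marginal_storage_cost(600_000, 1, HYPOTHETICAL_TIERS))

# Pricing dimensions multiply: 14 price points per region x 21 regions.
print(14 * 21)  # 294
```

Even in this toy model, answering "how much does 1 GB cost?" first requires knowing the storage type, the region, and the current usage.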

That’s probably enough to demonstrate the complexity of cloud pricing, but let’s go a little deeper. Next, we have to think about how many times the file will be accessed, whether it will be accessed from within the same region or from outside it, whether we will run analysis tasks on the folder containing all the files, and finally whether the data will be replicated. Taking all of these into account, the total number of S3 price points comes to 2,721.
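The sketch below shows how those usage questions turn a single storage price into a usage-dependent monthly cost. It is a simplified model under stated assumptions: the unit prices are placeholders, not real AWS rates; `FileUsage` and `monthly_cost` are hypothetical names; and analysis features are left out entirely.

```python
from dataclasses import dataclass

# Illustrative placeholder unit prices in USD, NOT real AWS rates.
STORAGE_PER_GB_MONTH = 0.02
PER_1000_GET_REQUESTS = 0.0004
CROSS_REGION_TRANSFER_PER_GB = 0.02


@dataclass
class FileUsage:
    """Assumptions you have to make before you can price a single stored file."""
    size_gb: float
    get_requests_per_month: int
    gb_read_from_other_regions: float
    replicated_to_second_region: bool


def monthly_cost(u: FileUsage) -> float:
    storage = u.size_gb * STORAGE_PER_GB_MONTH
    requests = u.get_requests_per_month / 1000 * PER_1000_GET_REQUESTS
    transfer = u.gb_read_from_other_regions * CROSS_REGION_TRANSFER_PER_GB
    # Simplification: replication is modeled only as storing a second copy at the same rate.
    # Real replication pricing also involves transfer and request charges.
    replica = storage if u.replicated_to_second_region else 0.0
    return storage + requests + transfer + replica


quiet = FileUsage(size_gb=1, get_requests_per_month=100,
                  gb_read_from_other_regions=0, replicated_to_second_region=False)
busy = FileUsage(size_gb=1, get_requests_per_month=1_000_000,
                 gb_read_from_other_regions=50, replicated_to_second_region=True)
print(f"quiet: ${monthly_cost(quiet):.4f}, busy: ${monthly_cost(busy):.4f}")
```

Both scenarios store the same 1 GB file, yet with these made-up rates the busy one costs over 70 times more, entirely because of the usage assumptions.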

I have chosen a relatively simple example here. AWS EC2 has 1,716,582 price points, of which 1,611,573 are for the compute instances themselves.

So far, we’ve focused on the price points for AWS S3, but S3 is rarely used by itself. It is common to pair S3 with a compute service such as AWS Lambda to process the data. Lambda, in turn, is commonly used with API Gateway, which acts as a “front door” for applications to reach the compute service, along with CloudWatch for logging and either RDS or DynamoDB for record keeping. You can see how answering the original question by calculating costs manually is, at best, very time-consuming and, at worst, practically impossible.
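A rough way to picture this is a bill of line items, one per service and usage unit, each needing its own usage estimate and unit price. The following is a minimal sketch: the architecture, quantities, and prices are all hypothetical placeholders chosen for illustration, and `LineItem` and `cost_by_service` are illustrative names.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class LineItem:
    service: str
    usage_unit: str
    monthly_quantity: float  # a usage assumption you must estimate up front
    unit_price_usd: float    # illustrative placeholder, NOT a real AWS rate


def cost_by_service(items: List[LineItem]) -> Dict[str, float]:
    """Sum quantity x unit price for each service."""
    totals: Dict[str, float] = {}
    for item in items:
        totals[item.service] = totals.get(item.service, 0.0) + item.monthly_quantity * item.unit_price_usd
    return totals


# A hypothetical serverless architecture; every quantity and price is made up.
architecture = [
    LineItem("S3", "GB-month stored", 100, 0.02),
    LineItem("Lambda", "million requests", 5, 0.20),
    LineItem("Lambda", "GB-seconds of compute", 200_000, 0.00001),
    LineItem("API Gateway", "million requests", 5, 3.00),
    LineItem("CloudWatch", "GB of logs ingested", 10, 0.50),
    LineItem("DynamoDB", "GB-month stored", 20, 0.25),
]

totals = cost_by_service(architecture)
for service, cost in totals.items():
    print(f"{service:12} ${cost:8.2f}")
print(f"{'Total':12} ${sum(totals.values()):8.2f}")
```

The summing is trivial; the hard part is that every `unit_price_usd` has to be chosen from thousands of price points, and every `monthly_quantity` is a guess about future usage.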

How do I know the exact number of price points? We built these price mappings while developing Infracost.io. Infracost takes Terraform files (Infrastructure as Code) and provides developers with a cost estimate, so that costs are better understood before infrastructure is launched or changed. We believe that giving developers and DevOps engineers easy access to pricing information will enable them to make better decisions at design time, ultimately reducing waste in cloud spend.
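To illustrate the general idea of estimating costs from Terraform, here is a minimal sketch. It is not how Infracost is implemented. It assumes Terraform's JSON plan format (as produced by `terraform show -json <planfile>`), a single region, and a tiny hypothetical price table with placeholder hourly rates; `estimate_monthly_costs` is an illustrative name.

```python
import json
from typing import Dict, Optional, Tuple

# Hypothetical price table keyed by (Terraform resource type, instance type).
# Placeholder hourly prices in USD, NOT real AWS rates; a single region is assumed.
HOURLY_PRICES: Dict[Tuple[str, str], float] = {
    ("aws_instance", "t3.micro"): 0.01,
    ("aws_instance", "m5.large"): 0.10,
}
HOURS_PER_MONTH = 730


def estimate_monthly_costs(plan_json: str) -> Dict[str, Optional[float]]:
    """Map each resource that exists after the plan is applied to an estimated monthly cost.

    Returns None for resources this toy table cannot price, e.g. usage-based ones.
    """
    plan = json.loads(plan_json)
    estimates: Dict[str, Optional[float]] = {}
    for rc in plan.get("resource_changes", []):
        after = rc.get("change", {}).get("after")
        if after is None:  # the resource is being deleted
            continue
        key = (rc["type"], after.get("instance_type", ""))
        hourly = HOURLY_PRICES.get(key)
        estimates[rc["address"]] = None if hourly is None else hourly * HOURS_PER_MONTH
    return estimates


# A trimmed-down example of the plan JSON structure.
example_plan = json.dumps({
    "resource_changes": [
        {"address": "aws_instance.web", "type": "aws_instance",
         "change": {"actions": ["create"], "after": {"instance_type": "m5.large"}}},
        {"address": "aws_s3_bucket.data", "type": "aws_s3_bucket",
         "change": {"actions": ["create"], "after": {"bucket": "example-data"}}},
    ]
})
print(estimate_monthly_costs(example_plan))
```

The S3 bucket comes back as `None` here because, as the S3 example above shows, its cost depends on usage that the Terraform code alone does not describe.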

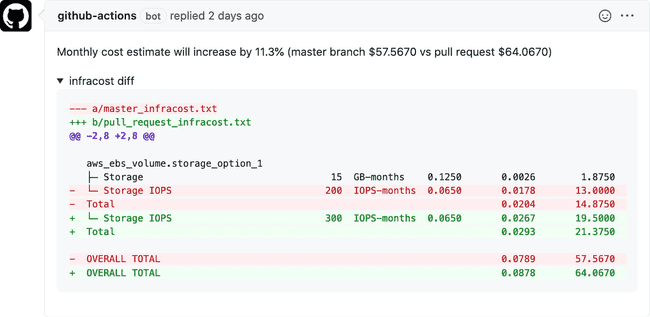

Infracost does all the complex cost calculations and leaves a simple comment in your pull request describing the cost implications.
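As a rough illustration of what such a comment might summarize, the sketch below turns per-resource monthly estimates from before and after a change into a Markdown table. The table layout and the `format_cost_comment` helper are hypothetical and do not reproduce Infracost's actual comment format; posting the comment to a Git host is out of scope.

```python
from typing import Dict


def format_cost_comment(before: Dict[str, float], after: Dict[str, float]) -> str:
    """Render per-resource monthly cost changes as a Markdown table for a PR comment."""
    rows = [
        "| Resource | Before ($/month) | After ($/month) | Change |",
        "|---|---:|---:|---:|",
    ]
    for address in sorted(set(before) | set(after)):
        old = before.get(address, 0.0)  # missing from "before" means newly added
        new = after.get(address, 0.0)   # missing from "after" means removed
        rows.append(f"| {address} | {old:,.2f} | {new:,.2f} | {new - old:+,.2f} |")
    old_total, new_total = sum(before.values()), sum(after.values())
    rows.append(f"| **Total** | {old_total:,.2f} | {new_total:,.2f} | {new_total - old_total:+,.2f} |")
    return "\n".join(rows)


print(format_cost_comment(
    before={"aws_instance.web": 73.0},
    after={"aws_instance.web": 146.0, "aws_instance.worker": 7.3},
))
```

Showing the change alongside the totals puts the cost impact of a pull request in front of the reviewer at a glance.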

Infracost.io is free and open source. We are seeing more companies add the tool to their CI pipelines so that all developers get quick and easy access to cloud pricing information. If you would like to help us get there, please join us on GitHub, try it out, and start contributing. See you there!